Things you should consider regarding client-side performance before releasing a website.

Lately I have been working with websites built a year ago or more, and one thing that always surprises me is that none of them have implemented any compression or rules for improving client-side performance. You might think that these websites are old and that we did not look at things like that back then. But back in 2010 I was at a conference in the USA where they talked about compression of CSS and JS, and even though that was three years ago, I remember the colleagues I was there with saying that this was something we already used and had been using for a while. So why have we not been implementing simple things like compression on every project since then?

I know that this subject has been written about before, but we seem to forget or ignore it. Frederik Vig wrote a great post about it a couple of years ago: http://www.frederikvig.com/2011/10/faster-episerver-sites-client-side-performance/

So how is it that we do not implement simple rules and functions in our projects before we release them? With such a small amount of work you can go a long way toward fixing the performance of your website. Sadly, most of the time we do not plan for this and therefore do not have time to implement all of it. We tend to focus on setting up environments with CI or web deploy and many other nice-to-have features, but we forget about performance. You must add at least some of it, especially in times of Responsive Web Design (RWD)!

Web Performance Optimization (WPO)

This can seem like a big and intimidating task, but it is actually really easy to apply to your project. The 80/20 rule applies here: a small effort will get you 80% of the result.

When RWD became popular, this subject became more important, since mobile devices might use slow connections and more and more people access your website from places other than the office, where the internet connection is great.

Compress/Bundle JS and CSS and finally load them async

Most of our JavaScript and CSS development is split across more than one file to make development easier. But most current browsers limit the number of simultaneous connections per host to about six. That means it takes time to download many resources, since the rest are queued while the first six requests are processed. By bundling files we can reduce the number of requests. Many of the tools also minify the files, removing whitespace and line breaks, which reduces file size.
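To make the idea concrete, here is a minimal sketch of bundling and minification in Python. It is not how Microsoft Web Optimization or any other real tool works internally; the function names, the deliberately naive minifier, and the limit of six parallel requests are all assumptions made for illustration.

```python
import math
import re
from typing import Iterable

# Assumption: a typical per-host connection limit, as described above.
MAX_PARALLEL_REQUESTS = 6


def request_rounds(file_count: int, parallel: int = MAX_PARALLEL_REQUESTS) -> int:
    """Rough number of download rounds when only `parallel` requests run at once."""
    return math.ceil(file_count / parallel)


def minify_css(css: str) -> str:
    """Naive CSS minifier: strips comments and redundant whitespace.

    Real minifiers also handle strings, url(...) values and other edge
    cases; this sketch does not.
    """
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # drop /* comments */
    css = re.sub(r"\s+", " ", css)                         # collapse whitespace runs
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)            # no spaces around { } ; ,
    css = re.sub(r":\s+", ":", css)                        # no space after a colon
    return css.strip()


def bundle_css(sources: Iterable[str]) -> str:
    """Concatenate several stylesheets into one minified bundle."""
    return "".join(minify_css(source) for source in sources)


if __name__ == "__main__":
    stylesheets = [
        "/* reset */\nbody {\n  margin: 0;\n}\n",
        "h1, h2 {\n  color: red;\n}\n",
    ]
    print(bundle_css(stylesheets))
    print(request_rounds(14), "rounds for 14 separate files")
    print(request_rounds(1), "round for a single bundle")
```

With the assumed limit of six, fourteen separate files need three rounds of requests, while a single bundle needs one.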

As I am not a fan of “cool” plugins for Visual Studio, I suggest using NuGet to install packages such as Microsoft Web Optimization.

- http://nuget.org/packages/Microsoft.AspNet.Web.Optimization/1.0.0

- http://blogs.msdn.com/b/rickandy/archive/2012/08/14/adding-bundling-and-minification-to-web-forms.aspx

- http://www.asp.net/mvc/tutorials/mvc-4/bundling-and-minification

JavaScript is probably what is slowing your site down. Optimizing the scripts themselves could take a lot of time, so instead we bundle them and try to load them asynchronously to stop scripts from blocking the rest of the page. We also need to focus on sending less JavaScript and using smaller libraries, especially on mobile devices.

More information about JavaScript loaders:

- http://css-tricks.com/thinking-async/

- http://www.netmagazine.com/features/essential-javascript-top-five-script-loaders

Optimizing images

Working with images has always been a pain when it comes to the web. As a developer I try to build a great website exactly the way the Art Director specifies. Sadly, editors do not think the same way when it comes to images, especially their size. As developers working with a CMS, we need to understand that this might be an issue and write code that produces images with the correct size, optimized for the web.

First we need to pick the right format for images: PNG for icons and graphics, JPEG for photos and GIF only for animations (if you still need that).
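The rule of thumb above is small enough to write down as a lookup. The sketch below is only an illustration; the function name and the category labels are hypothetical, not part of EPiServer or any other library.

```python
# Assumption: the category names are made up for this example.
FORMAT_BY_KIND = {
    "icon": "png",
    "graphic": "png",
    "photo": "jpeg",
    "animation": "gif",
}


def pick_image_format(kind: str) -> str:
    """Return the suggested file format for a kind of image."""
    try:
        return FORMAT_BY_KIND[kind]
    except KeyError:
        raise ValueError(f"unknown image kind: {kind!r}") from None


if __name__ == "__main__":
    for kind in ("icon", "photo", "animation"):
        print(kind, "->", pick_image_format(kind))
```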

If you are a developer who also creates images, make sure you save them with the lowest tolerable quality. There are also tools you can use in Visual Studio to optimize your local images, for instance Image Optimizer.

Once the images have been uploaded to EPiServer, we need to optimize them on output as well. We can do that with a lossless image optimizer like ImageOptim, http://imageoptim.com, or Smush.it, http://www.smushit.com. These tools squeeze the unnecessary bytes out of the images.

A great tool is the plugin written by Geta and Frederik Vig in Norway, http://www.frederikvig.com/2012/05/faster-episerver-sites-image-optimization/.

What about using the right image size for both desktop and mobile?

Once we are done optimizing the file size of the images, we need to look into loading images from EPiServer for different resolutions. We need to scale them so they fit our needs. Using larger images than needed hurts performance.

In this case I suggest looking into using Adaptive Images, http://adaptive-images.com/. A blogpost has also been written about this, http://blog.huilaaja.net/2013/03/28/adaptive-images/
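The core of the idea can be sketched in a few lines: pick the smallest predefined width that still covers the visitor's screen, and never serve anything larger than the original. This is a simplified illustration in Python, not the Adaptive Images implementation; the breakpoint values and the function name are assumptions.

```python
from typing import Sequence

# Assumption: illustrative breakpoints in pixels, not taken from the post.
BREAKPOINTS = (480, 768, 992, 1382)


def pick_image_width(
    screen_width: int,
    original_width: int,
    breakpoints: Sequence[int] = BREAKPOINTS,
) -> int:
    """Return the width to serve for a given screen width.

    Chooses the smallest breakpoint that covers the screen and never
    returns more than the original image width (no upscaling).
    """
    for breakpoint_width in sorted(breakpoints):
        if breakpoint_width >= screen_width:
            return min(breakpoint_width, original_width)
    # The screen is wider than every breakpoint: serve the original size.
    return original_width


if __name__ == "__main__":
    for screen in (320, 800, 1920):
        print(screen, "->", pick_image_width(screen, original_width=2000))
```

A phone with a 320 pixel wide screen would then get a 480 pixel image instead of the 2000 pixel original that the editor uploaded.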

Test your performance

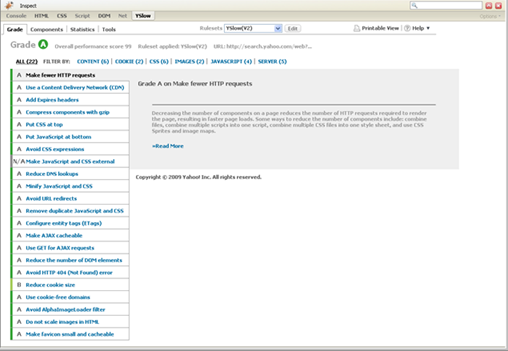

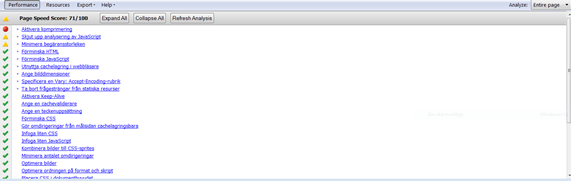

When you are done with all the compression and image optimization, you will probably want to test your website. This can easily be done with Firebug plugins such as YSlow and Google PageSpeed.

Image: YSlow

Image: Google PageSpeed

These plugins come in handy as a developer, but you can also test your website online with tools like WebPageTest (http://www.webpagetest.org/) or Google PageSpeed (https://developers.google.com/speed/pagespeed/).

Summary

There are a lot of improvements that can be made with a small amount of time and effort. The things I have mentioned should be added to every project. Of course we can do much more, but it is at least a start. Some further things to consider:

- Use a CDN.

- Use sprites for images.

- Embed images as resources in CSS.

- Add caching for both EPiServer content and static files.

- Add Expires headers.

- Compress your components using gzip; this is very easy in IIS 7 and above.
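To get a feeling for the last two points, the sketch below measures how much gzip saves on a piece of repetitive CSS and builds a far-future Expires header value. It uses only the Python standard library and is not an IIS configuration; the function names and the one-year lifetime are assumptions chosen for illustration.

```python
import gzip
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime


def gzip_savings(payload: bytes) -> float:
    """Fraction of bytes saved by gzip-compressing `payload`.

    Can be negative for very small inputs, where the gzip header
    outweighs the savings.
    """
    if not payload:
        return 0.0
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


def expires_header(now: datetime, days: int = 365) -> str:
    """Build an HTTP Expires header value `days` after `now`, in GMT."""
    expires_at = now.astimezone(timezone.utc) + timedelta(days=days)
    return format_datetime(expires_at, usegmt=True)


if __name__ == "__main__":
    css = b"body { margin: 0; padding: 0; }\n" * 200
    print(f"gzip saves {gzip_savings(css):.0%} on this sample")
    print("Expires:", expires_header(datetime.now(timezone.utc)))
```

Text formats such as CSS, JavaScript and HTML usually compress well; formats that are already compressed, such as JPEG, gain very little from gzip.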

Resources

- http://www.webpagetest.org/ – “Run a free website speed test from multiple locations around the globe using real browsers (IE and Chrome) and at real consumer connection speeds. You can run simple tests or perform advanced testing including multi-step transactions, video capture, content blocking and much more. Your results will provide rich diagnostic information including resource loading waterfall charts, Page Speed optimization checks and suggestions for improvements.”

- https://developers.google.com/speed/ – Great resource from Google about page speed.

- https://developers.google.com/speed/docs/best-practices/mobile – Things to consider when working with mobile devices.

- http://en.wikipedia.org/wiki/Web_performance_optimization – Definition of WPO and some great links.

Nice one!

Great post!

Nice summary on performance. However here are my 2 cents:

- Just remember to think about the cases when you receive a bug report from the client complaining about a client-side script error in file.js on line 1, column 589 :) We have to be able to fall back to the unminified files when needed.